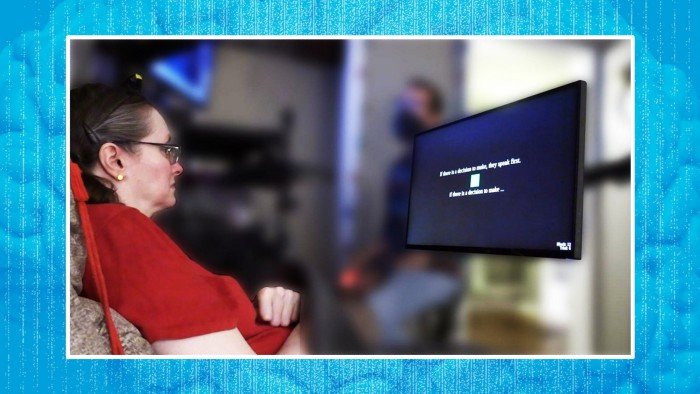

Scientists in California have developed what they say is the first brain implant capable of decoding and vocalising inner speech — words imagined in the head by people whose paralysis prevents them even attempting to speak.

Researchers had previously given a voice to people unable to speak by picking up signals in the brain's motor cortex as they tried to move their mouth, tongue, lips and vocal cords. Now the team at Stanford University has removed the need to attempt physical speech at all.

“This is the first time we’ve managed to understand what brain activity looks like when you just think about speaking,” said Erin Kunz of Stanford, lead author of a paper describing the project in the journal Cell. “For people with severe speech and motor impairments, brain-computer interfaces [BCIs] capable of decoding inner speech could help them communicate much more easily and more naturally.”

Intensive research and development of BCIs is under way in the private and academic sectors to improve the communication and mobility of people with disabilities. Investment is set to increase further following this week's announcement of Merge, a new BCI venture backed by Sam Altman's OpenAI to compete with Elon Musk's Neuralink.

Four participants severely paralysed by amyotrophic lateral sclerosis (ALS) or brainstem stroke took part in the Stanford study. One could communicate only through his eyes, moving them up and down for yes and from side to side for no, said Kunz.

After electrode arrays from the BrainGate BCI consortium were implanted in their motor cortex, the brain area that controls the movements of speech, the participants were asked either to attempt to speak or to silently imagine saying a set of words. AI models were then trained to recognise the patterns of neural activity associated with individual phonemes, the basic units of speech sound, and to knit them together into sentences.

Imagined speech produced patterns of activity in the motor cortex that were similar to attempted speech but with distinct differences. The signals from inner speech were weaker but sufficiently recognisable to give an accuracy rate of up to 74 per cent in real time.

Frank Willett, an assistant professor of neurosurgery at Stanford, said the decoding was reliable enough to demonstrate that, with improvements in implant hardware and recognition software, "future systems could restore fluent, rapid and comfortable speech via inner speech alone.

"For people with paralysis, attempting to speak can be slow and fatiguing and, if the paralysis is partial, it can produce distracting sounds and difficulties with breath control," he said.

A significant discovery during the study was that the BCI could pick up some inner speech that participants were not instructed to imagine saying, such as numbers when they were counting shapes on a screen. That raised the issue of private thoughts leaking out against the user’s wishes.

To protect privacy, the Stanford team demonstrated a password system that prevents the BCI from decoding inner speech unless the user unlocks it by imagining a passphrase. In the study, the phrase "chitty chitty bang bang" was 98 per cent successful at preventing involuntary decoding of private thoughts.

“This work gives real hope that speech BCIs can one day restore communication that is as fluent, natural and comfortable as conversational speech,” said Willett.